WASHINGTON, D.C. — Nearly every parent’s biggest worry is how to provide for the health, safety and welfare of their children. It’s a concern that takes many forms: getting them to school safely and back again, serving them the best food and housing them safely. More and more, knowing — really, not knowing — what their child may be doing online is a key worry.

Others are beginning to listen.

Congress is one important group paying attention — and could be taking action before the current term ends in December. There are proposals to create a new privacy division at the Federal Trade Commission, expand federal protections for children’s data, fund government research into kids’ mental health and urge companies to act in the “best interest of the child.”

And what Congress can’t or won’t do, the FTC itself can try to launch on its own — everything except the funding part, that is.

Two years ago, the FTC demanded that nine social media companies including Facebook (now Meta), Twitter and YouTube hand over information about how they collect and use personal data, including “how their practices affect children and teens.” Were it to launch a formal study, the FTC could compel firms to disclose what has been closely held data.

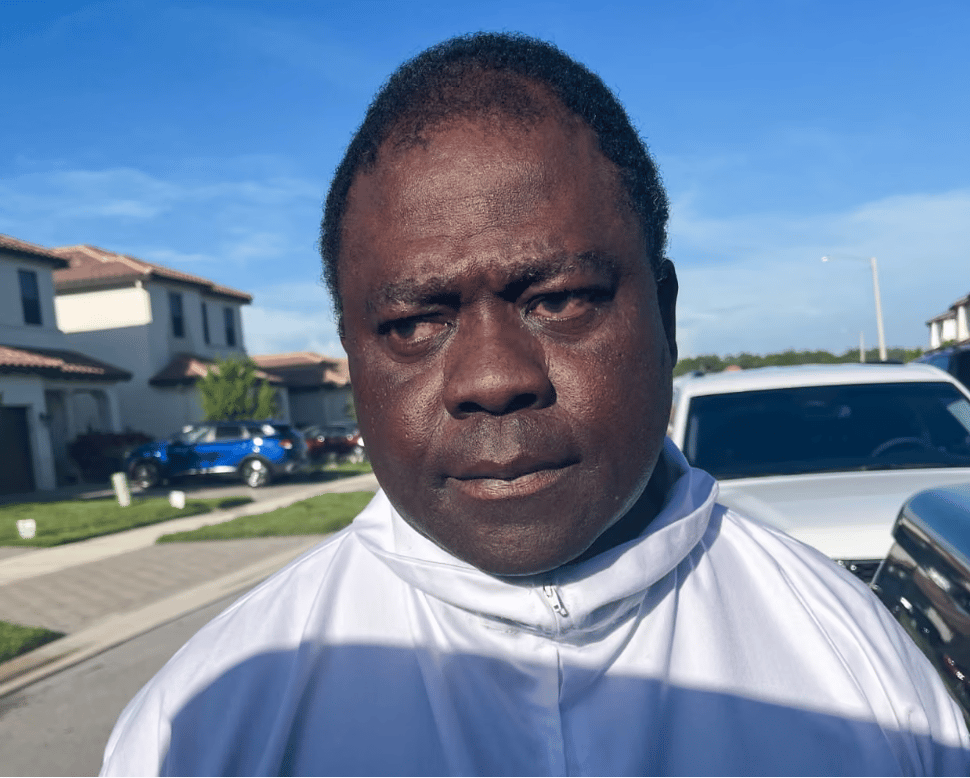

The FTC’s newest commissioner, Alvaro Bedoya, told The Washington Post in an interview posted Aug. 25 that the protection of children is one of the FTC’s policy areas where there is bipartisanship. Bedoya’s confirmation by the Senate now gives Democratic appointees a 3-2 majority on the FTC.

Usually, that is. The FTC voted in August to consider new rules concerning “lax data security or commercial surveillance practices.” The vote was 3-2 along partisan lines.

Social media giants, though, are themselves paying attention to the potential harms their services pose to young people.

Snapchat announced in August the introduction of parental controls. In order to activate them, parents have to get their own Snapchat accounts and their children must agree to the controls.

The controls will let parents see whom their teenagers are friends with on the app and whom they have communicated with in the previous seven days, according to a blog post by Snapchat’s parent company, Snap, announcing the controls.

While parents will be able to report accounts that their children are friends with if they violate Snapchat’s policies, they will not be able to see their children’s conversations.

“Our goal was to create a set of tools designed to reflect the dynamics of real-world relationships and foster collaboration and trust between parents and teens,” the blog posting said.

The availability of parental controls is not all-encompassing. Some features aren’t ready to be introduced yet, according to Snap.

Moreover, the controls are currently available in only five countries: the United States, Britain, Canada, Australia and New Zealand. Other countries, Snap said, will gain access to the parental controls come autumn.

Snap is just one of the companies feeling heat from politicians, regulators and investors to improve its products and services or face unwanted consequences.

In Britain, the Government Communications Headquarters and the U.K.’s National Cyber Security Centre made a joint declaration in July calling for “client-side scanning” to keep child sexual abuse material off phones and other devices.

Apple has a version of the client-side scanning system, which would, for the first time from any major platform, scan photos on users’ own hardware rather than waiting for them to be uploaded to the company’s servers.

Some civil libertarians in Britain pushed back vehemently on this recommendation, saying that tech giants with the capability to do this could also use that capacity to surveil anyone’s digital life, be they online or off.

But the 70-page joint GCHQ-NCSC paper suggests that the databases of images assembled by child protection groups around the world, such as the National Center for Missing and Exploited Children in the United States, be kept as comprehensive as possible, but that the scanning database be made up only of those images that appear in all groups’ lists.

They can then publish a “hash,” a cryptographic signature, of that database when they hand it over to tech companies, which can show the same hash when the database is loaded onto people’s cellphones. If China, for instance, forced Apple to load a different database for users in China, the hash would change accordingly, and users would know that the system was no longer trustworthy.
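
For readers curious how such a check might work in practice, the short Python sketch below illustrates the two ideas described in the paper: a scanning database built only from entries found on every group’s list, and a published hash that changes if the database is altered. It is an illustration under stated assumptions, not the method from the GCHQ-NCSC paper or Apple’s system. The function names, the sample entries and the choice of SHA-256 are all hypothetical.

```python
import hashlib


def build_scanning_database(group_lists):
    """Keep only the entries that appear in every group's list (hypothetical helper)."""
    sets = [set(entries) for entries in group_lists]
    return set.intersection(*sets) if sets else set()


def database_hash(entries):
    """Return a SHA-256 digest of the database (SHA-256 is an assumption).

    Entries are sorted so the same set always yields the same digest, and each
    entry is length-prefixed so that entry boundaries cannot be confused.
    """
    digest = hashlib.sha256()
    for entry in sorted(entries):
        digest.update(len(entry).to_bytes(4, "big"))
        digest.update(entry)
    return digest.hexdigest()


# Made-up identifiers standing in for image fingerprints held by three groups.
group_a = [b"img-001", b"img-002", b"img-003"]
group_b = [b"img-002", b"img-003", b"img-004"]
group_c = [b"img-002", b"img-003"]

shared = build_scanning_database([group_a, group_b, group_c])
published = database_hash(shared)  # the value the groups would publish

# A phone recomputes the hash of the database it was given and compares.
matches = database_hash(shared) == published

# A database with one extra entry no longer matches the published hash.
tampered = shared | {b"img-999"}
tampered_matches = database_hash(tampered) == published
```

In this sketch, `matches` is true and `tampered_matches` is false, which is the property the paper relies on: anyone holding the published hash can tell whether the database on a device is the one the child protection groups agreed on.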

The paper does not claim that this is the best solution, only that a solution, and the technology to apply it, exists now and can be used until something better comes along.